We have been some busy bees here lately at thirty bees. Today we are adding three new modules to our growing list of core modules. By now most users know that we focus a great deal on performance at thirty bees, and the new modules are no exception. They are managers for the server-side caching systems that are most popular among thirty bees users: Memcache Manager, Opcache Manager, and APCu Cache Manager.

The new modules are not for increasing performance in your shop per se. They are there to help you manage the caching system you already use. They can tell you whether you need to increase the memory dedicated to your caching system, which will speed your shop up if the cache is being flushed constantly. The modules can also tell you whether your caches are working efficiently, or whether they are restarting too often. One of my favorite features is the ability to clear the opcode cache easily, without having to restart PHP or other services.

Opcache Manager

Opcache is one of the caches that every thirty bees site should be using. It has been bundled with PHP since version 5.5, and it gets faster with every release. Opcache speeds your site up by storing the core PHP files, module files, and even the compiled Smarty template files in memory in a precompiled state. This compiled form is called opcode. The benefits of using Opcache are twofold: on one side, you cut out the disk read needed to execute a file; on the other, the file has already been compiled from PHP to opcode. This is what makes Opcache work so well. Disk reads are very slow and cost a lot of time, and Opcache cuts them to almost nothing because all the PHP files are held in a memory cache. This cache, however, is very small on most default PHP installations: just 32mb. We generally recommend raising that to either 64mb or 128mb. You can use our Opcache Manager to figure out exactly what size your cache needs to be.
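To make the sizing advice a bit more concrete, here is a minimal Python sketch of the kind of check the Opcache Manager lets you do by eye. It assumes you have already pulled the `memory_usage` and `opcache_statistics` arrays from PHP's `opcache_get_status()` into dictionaries. The `suggest_opcache_size_mb` helper, the size steps, and the 10% free-memory threshold are our own illustrative assumptions, not the module's code.

```python
"""Rough Opcache sizing check: an illustrative sketch, not the Opcache
Manager's actual logic. The dictionary keys mirror the 'memory_usage' and
'opcache_statistics' arrays returned by PHP's opcache_get_status(); the
size steps and the 10% free-memory threshold are assumptions."""

MB = 1024 * 1024
SIZE_STEPS_MB = [32, 64, 128, 256]  # sizes to step through, smallest first
MIN_FREE_FRACTION = 0.10  # assumption: under 10% free counts as "too full"


def suggest_opcache_size_mb(current_mb, memory_usage, statistics):
    """Return the next size step if the cache looks too small, else current_mb."""
    used = memory_usage["used_memory"]
    free = memory_usage["free_memory"]
    wasted = memory_usage["wasted_memory"]
    total = used + free + wasted
    free_fraction = free / total if total else 0.0

    # An out-of-memory restart means Opcache ran out of room and flushed itself.
    too_small = statistics["oom_restarts"] > 0 or free_fraction < MIN_FREE_FRACTION
    if not too_small:
        return current_mb
    for step in SIZE_STEPS_MB:
        if step > current_mb:
            return step
    return current_mb  # already at the largest step considered here


# Example: a 64mb cache that is almost full and has restarted twice.
usage = {"used_memory": 60 * MB, "free_memory": 3 * MB, "wasted_memory": 1 * MB}
stats = {"oom_restarts": 2}
print(suggest_opcache_size_mb(64, usage, stats))  # 128
```

The sketch only ever suggests going up one step at a time, which matches the "raise it and see how it fills" approach: after changing the setting, you would let the shop run for a while and check again.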

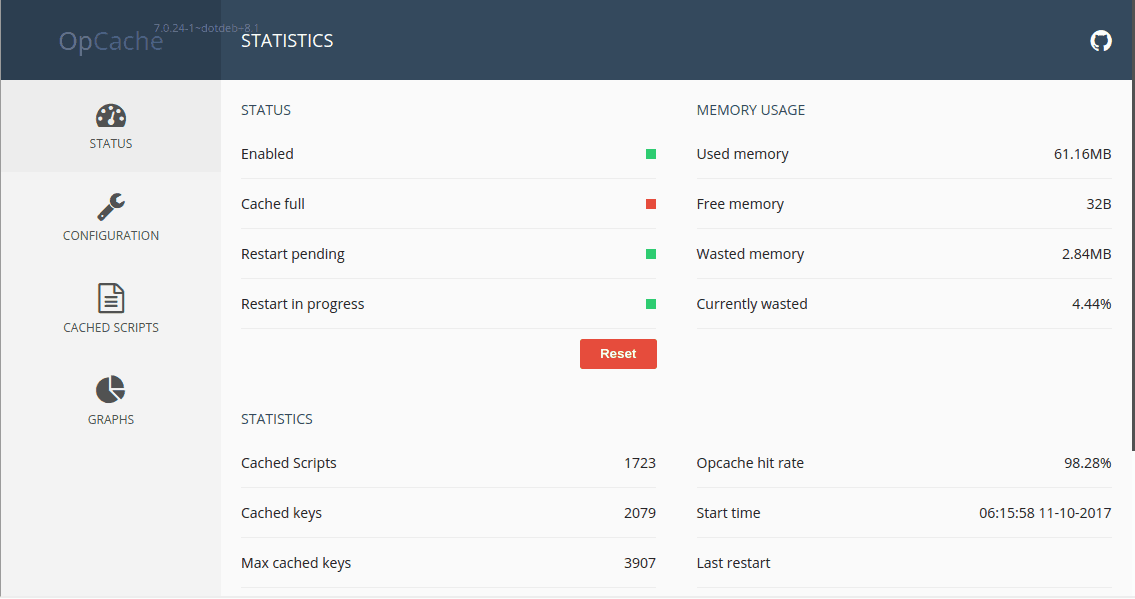

Below is an image of the main interface of the module.

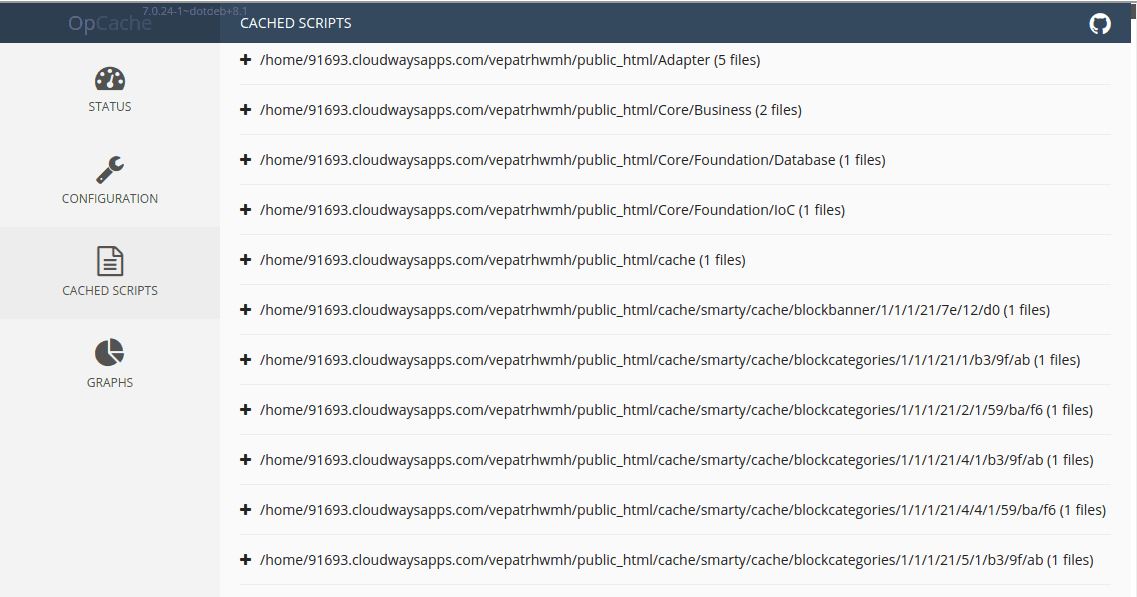

You can see we have Opcache on our test server set to 64mb. If this were a production server, I would raise it to 128mb to see how it fills. Keep in mind that it is not only the core that is stored in Opcache memory; so are whatever modules you are using, and so are your compiled Smarty template files. (This is one huge reason we are really reluctant to move away from Smarty: Twig and other template languages do not create and store opcode, and they are slower.) Below you can see a screenshot of some of the different cached files; notice the Smarty files that are cached.

Out of all the different caching systems, Opcache is the only one that is recommended to be run alongside one of the others. The other two caches store user data, while Opcache caches compiled PHP code, so it can be used together with either APCu or Memcache.

APCu Cache Manager

APCu is a fork of APC, the older opcode cache project. When PHP 5.5 was announced, APC was expected to be the opcode cache included in 5.5. That changed over time, though, and Zend Optimizer+ was the opcode cache that was included. One thing APC did that Zend's cache did not, however, was cache user data alongside the opcode cache. The official PHP Opcache extension does not do this either. So the APC developers stopped working on APC and split the user cache out into a separate module called APCu. This module is meant to work with Opcache, and it contains only the user-entry portion of the old APC module. Using APCu together with Opcache is the fastest cache setup we recommend for thirty bees.

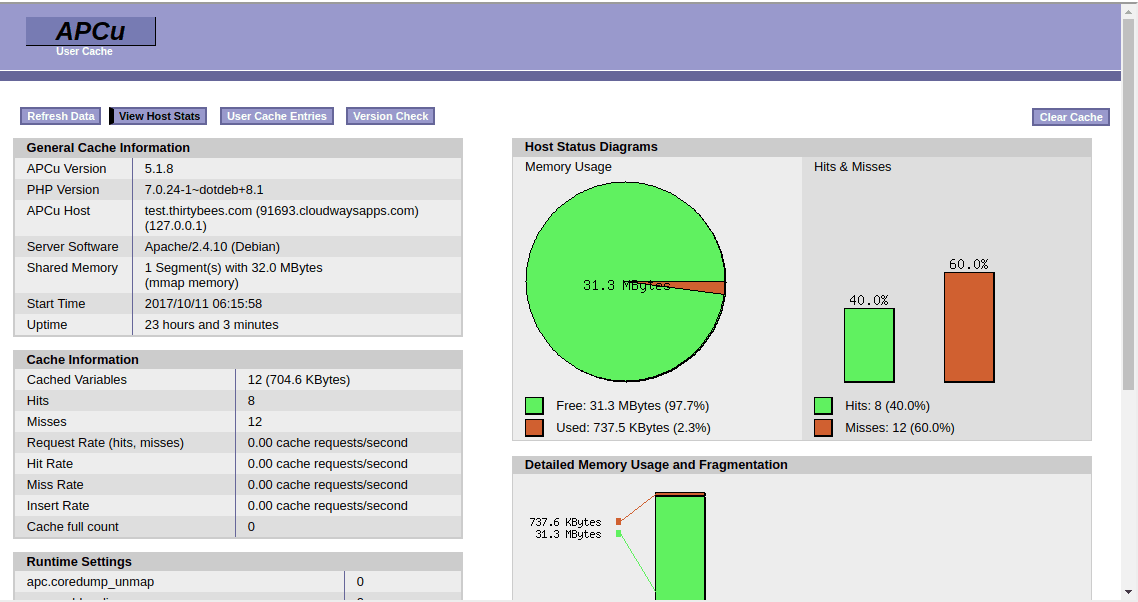

With APCu, the cache does not need to be as large as the Opcache. Since it only caches user entries, which are very small (less than 1kb each), you can get by with 32mb for the cache size, which should be more than enough. Flushing generally does not matter much with APCu either, since once a user leaves your site it does not matter if their cache entries are flushed. The screenshot above shows the cache interface for APCu.
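The arithmetic behind "32mb is more than enough" is simple. Here is a minimal sketch, assuming every entry is a full 1kb (the upper bound given above) and ignoring APCu's own per-entry bookkeeping, which makes real capacity somewhat lower in practice:

```python
# Back-of-the-envelope APCu capacity estimate (illustrative; assumes every
# entry is as large as the ~1kb upper bound and ignores APCu's own per-entry
# overhead, so real capacity will be somewhat lower).

KB = 1024
MB = 1024 * KB


def max_entries(cache_size_bytes, entry_size_bytes):
    """How many entries of a given size fit in the cache, at most."""
    return cache_size_bytes // entry_size_bytes


print(max_entries(32 * MB, 1 * KB))  # 32768
```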

Since this cache stores per-user information, there is generally a higher miss rate: there is no way to warm the cache for a specific user other than that user making the request. Even so, Opcache plus APCu is still the quickest combination for serving a thirty bees site.
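A toy model shows why the miss rate is higher. This Python sketch assumes a cache keyed by user with a simple cache-aside lookup; the `PerUserCache` class, the visitor names, and the `cart_summary` key are hypothetical and not how APCu or thirty bees actually key their entries. Every visitor's first request misses, so the fewer requests each visitor makes, the lower the hit rate.

```python
"""Toy model of a per-user cache (illustrative, not how APCu or thirty bees
actually key entries): each user's first request is always a miss, because
nothing can warm that user's entries ahead of time."""


class PerUserCache:
    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, user_id, key, compute):
        """Cache-aside lookup: return the cached value, or compute and store it."""
        cache_key = (user_id, key)
        if cache_key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[cache_key] = compute()
        return self._store[cache_key]

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


cache = PerUserCache()
# Three visitors, each loading the same (hypothetical) cart summary four times.
for user in ("alice", "bob", "carol"):
    for _ in range(4):
        cache.get_or_compute(user, "cart_summary", lambda: "rendered summary")

print(cache.misses, cache.hits, cache.hit_rate())  # 3 9 0.75
```

With four requests per visitor the hit rate tops out at 75%; with ten requests per visitor it would be 90%.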

Memcache Manager

Memcache is an old but reliable caching method. It is admittedly slower than just about any other method, but it is faster than not caching at all. Personally, I think memcache is misused most of the time, and that is why the results are slower. Unlike APCu, memcache adds an extra network round trip to the memcached server every time it retrieves information from the cache. Compared with caching methods like APCu that serve directly from local shared memory, it is a lot slower. So why have we included this slow cache? Because memcache excels at something APCu cannot do: you can share one caching instance across multiple front-end servers. This is what memcache was really designed for: multiple front-end servers, with one server set up as a caching instance that they all share. In a setup like this, memcache outperforms APCu, but it is a setup not many users run.
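The shared setup wins because every front-end server benefits from work that any other server has already cached. The Python sketch below is a simplified model under stated assumptions: two hypothetical servers named `web1` and `web2`, made-up cache keys, and no cost at all for the network round trip. It only counts cache misses, each of which means regenerating the data.

```python
"""Toy comparison of per-server caches vs one shared cache (illustrative;
ignores network latency, which is exactly the cost memcache pays)."""


def count_misses(requests, shared):
    """requests: list of (server, key) pairs in arrival order.

    With shared=False every front-end server keeps its own local cache (like
    APCu); with shared=True all servers use one cache instance (like memcache).
    """
    caches = {}
    misses = 0
    for server, key in requests:
        cache = caches.setdefault("shared" if shared else server, set())
        if key not in cache:
            misses += 1
            cache.add(key)
    return misses


# The same three (hypothetical) cache keys requested on each of two servers.
requests = [(server, key) for server in ("web1", "web2")
            for key in ("product:1", "product:2", "category:5")]

print(count_misses(requests, shared=False))  # 6
print(count_misses(requests, shared=True))   # 3
```

With more front-end servers the gap grows, which is the trade-off described above: memcache pays a network cost on every lookup but avoids each server warming its own copy of the cache.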

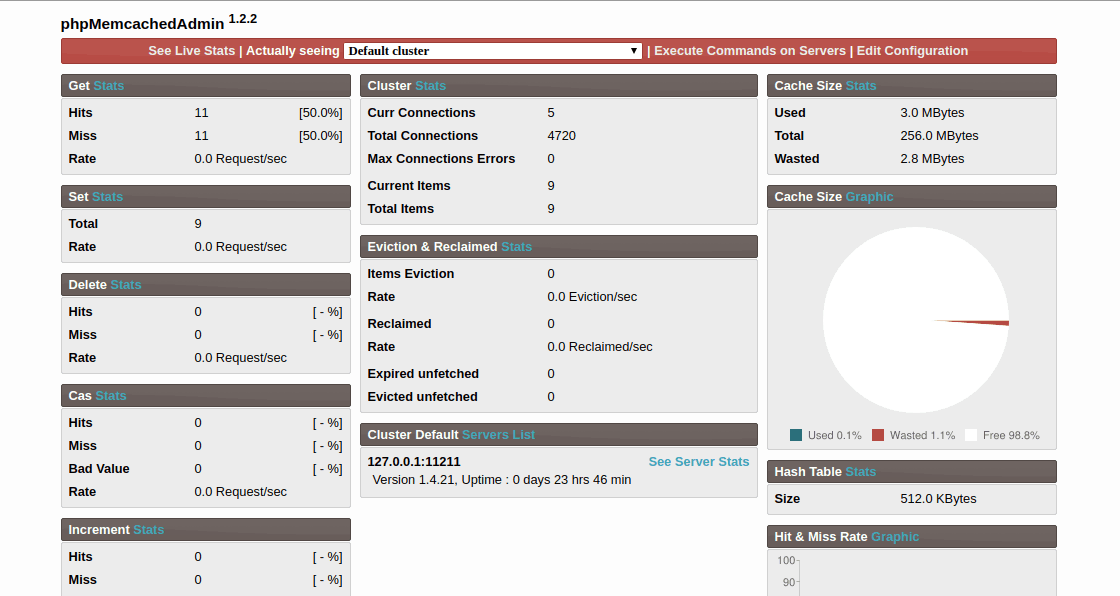

Our Memcache Manager does exactly what the other two modules do: it lets you view the usage of your Memcache server, and you can use it the same way to tune how much memory you need to dedicate to Memcache. It is hard to estimate up front how much memory you will need. I would run Memcache for a couple of days, check the memory usage, and start from there. The screenshot above shows the interface that the module uses.
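Turning "run it a couple of days and start from there" into a rule of thumb might look like the following Python sketch. It assumes you have collected a few snapshots of memcached's `stats` output (the `bytes`, `limit_maxbytes`, and `evictions` fields) into dictionaries. The `suggest_memcache_mb` helper, the 25% headroom, and the doubling-on-evictions rule are illustrative assumptions, not something the Memcache Manager does.

```python
"""Rough memcache sizing from a few days of observed stats (illustrative).

Each sample holds three fields memcached reports from its `stats` command:
'bytes' (memory used by items), 'limit_maxbytes' (configured limit), and
'evictions' (cumulative count of items evicted to make room). The headroom
and the power-of-two rounding are assumptions, not a rule.
"""

MB = 1024 * 1024
HEADROOM = 0.25  # assumption: leave 25% above the observed peak


def suggest_memcache_mb(samples):
    peak_bytes = max(s["bytes"] for s in samples)
    limit_bytes = samples[-1]["limit_maxbytes"]
    # Evictions rising over the window mean the cache was full and discarding
    # items, so the observed peak understates real demand.
    evicting = samples[-1]["evictions"] > samples[0]["evictions"]

    target = peak_bytes * (1 + HEADROOM)
    if evicting:
        target = max(target, limit_bytes * 2)

    size_mb = 1
    while size_mb * MB < target:
        size_mb *= 2  # round up to the next power of two
    return size_mb


# Three daily snapshots of a 64mb instance that never filled up.
samples = [
    {"bytes": 30 * MB, "limit_maxbytes": 64 * MB, "evictions": 0},
    {"bytes": 40 * MB, "limit_maxbytes": 64 * MB, "evictions": 0},
    {"bytes": 38 * MB, "limit_maxbytes": 64 * MB, "evictions": 0},
]
print(suggest_memcache_mb(samples))  # 64
```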

Speed is a big focus at thirty bees, which is why we are trying to give our users the tools they need to build the fastest possible shops while also teaching them how to use those tools. If you would like to check these modules out, use the download links below. If you have any questions about the modules, feel free to post a comment below.

These 3 new modules sound really nice, but I must be missing something?

I have installed all 3 modules in 2 shops, and in every case the module interface is not shown.

When I click “configure” for the modules it just shows “This page is not available”:

https://uploads.disquscdn.com/images/b996af696267749ffcf3f2515fd15da08c49aac367b40288e6e88c6c762bbdba.png

I have exactly the same issue

Are you using the latest versions of the modules? There was a build issue in the beginning.

I am using version 1.0.1 of all 3 modules. Are there newer versions?

There are not. Can you try deleting the modules and installing the release from GitHub?

I have a question about the Smarty side note. Why do you say Twig and other templating engines do not use OPcache? Twig compiles and caches templates to plain PHP code, just like Smarty does, and that code is then obviously cached by OPcache.

From my understanding and testing, with Twig the underlying code is cached, not the template itself. The PHP code behind Twig is compiled down, but the Twig template files are not stored in opcache memory. That also shows when you test templating language speed. See this article, https://gonzalo123.com/2011/01/17/php-template-engine-comparison/, this GitHub repo, https://github.com/jbroadway/template-bench, and this answer on why Twig is slower and not using opcache, https://stackoverflow.com/questions/9363215/pure-php-html-views-vs-template-engines-views

Then I have to say that, in my opinion, your understanding is wrong. Let me say first that I'm not really a Twig fan over Smarty; I consider both great tools for the job. But I do think knowledge is important, especially since I tested PrestaShop 1.7, decided there's no way I'm using it, and am considering migrating to TB, which apparently you are maintaining. So here goes my answer.

On the first link you posted, which is from 2011, if you look at the comments you will be able to see that the author says “I’ve done all test without catching because I suppose when catching the performance will be the same with all template engines”. The github link is from 2012. Here’s an up-to-date benchmark: https://github.com/dominics/smarty-twig-benchmark. The last one from stackoverflow, also from 2012 and from a Smarty developer, talks about some points that have since been fixed in Twig.

Now a quick explanation on how Twig templates are compiled. Each one of them is converted to a plain PHP file that’s just a class with signature:

where ${hash} is, wait for it, a hash. This class’ main function is:

which basically consists of a bunch of echo statements printing the template's HTML code and the template variables, which are passed in through $context. So because they are all just plain PHP scripts and the entire template is echoed as strings, they are cached by OPcache.

Just one last thing: I'd like to know whether current TB development plans are published somewhere, so I can see which parts are being worked on and whether I can help. I've been migrating 2 modules I sell to PS 1.7 and it's just not worth it, not to mention how bad the current default template is; they even removed Bootstrap, which you could count on in earlier versions. I checked the roadmap page, and apparently the only planned items are translations and an OmniPay module, mostly because a lot of other things are already done, which looks promising. Good job.

Dang, I think you are right. I have been going on old information. I remember looking at Twig when it first came out and deciding against learning it; it looks like it has changed a lot since then. Maybe there need to be new tests of it vs Smarty. It could be interesting to see how they fare against each other these days.

As far as a roadmap goes, we have yet to publish a full one. We are in the process of publishing them for the modules in the module repo. The core is likely going to stay untouched for a while, other than bug fixes and a couple of new theme / webp features. At the start of the year we are going to decide whether to redo the whole front end in a refactor. It's not going to be an overnight thing; rewriting it will likely be a 6-8 month ordeal. Until we make that decision, a lot hangs in the air. After the new theme features, though, we are going to freeze new features in the 1.0.x branch.

What areas are you good at? What would you like to help with? Being open source, we could always use more help.

I'm basically full-stack, generally leading teams and jumping into whatever area I see has the weaker developers working on it.

Lately I've been focusing on SQL profiling/optimization/restructuring, and on converting a lot of JavaScript code to TypeScript and moving off old third-party libraries like jQuery to vanilla JS features (which was fun but painful, and also involved converting a lot of JS animations to CSS ones). I'm also very experienced in Drupal and Symfony development, and have been tinkering with VueJS for fun.

So that’s why I asked if there’s some kind of coordination channel. I wouldn’t like to start working on something to find out it was being done or completely changed by another developer.

Send your email to [email protected] and I will send you an invitation to our Slack channel. That is where most of the people who do core work are. It's a good place to coordinate.